LHC Scientists Finally Detect Most Favored Higgs Decay

- Published: Tuesday, August 28 2018 10:49

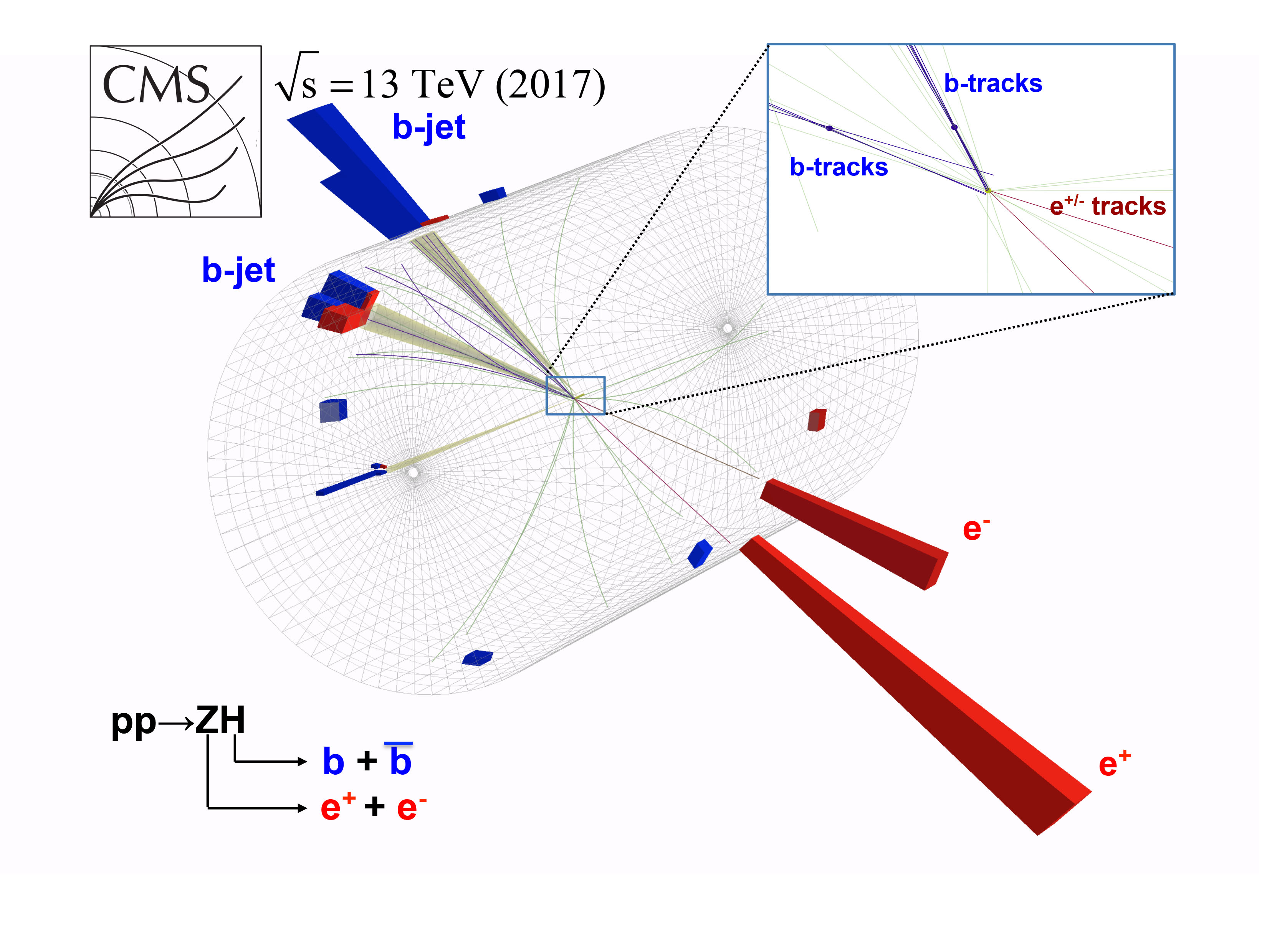

Candidate event showing the associated production of a Higgs boson and a Z boson, with the subsequent decay of the Higgs boson to a bottom quark and its antiparticle.

Scientists now know the fate of the vast majority of all Higgs bosons produced in the LHC.

Today at CERN, the Large Hadron Collider experiments ATLAS and CMS jointly announced the discovery of the Higgs boson transforming into bottom quarks as it decays. This is predicted to be the most common way for Higgs bosons to decay, yet the signal was difficult to isolate because ordinary background processes closely mimic it. The discovery is a big step forward in the quest to understand how the Higgs enables fundamental particles to acquire mass.

After several years of refining their techniques and gradually incorporating more data, both experiments finally saw evidence of the Higgs decaying to bottom quarks that exceeds the 5-sigma threshold of statistical significance typically required to claim a discovery. Both teams found their results were consistent with predictions based on the Standard Model. UMD professors Alberto Belloni, Drew Baden, Sarah Eno, Nick Hadley and Andris Skuja are members of the CMS collaboration.
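To give a sense of what the 5-sigma threshold means, the short sketch below converts a significance in standard deviations into the probability that background alone would fluctuate at least that far. It assumes the one-sided Gaussian tail convention commonly used in particle physics; the function name `one_sided_p_value` is purely illustrative and is not taken from either experiment's analysis.

```python
import math


def one_sided_p_value(n_sigma: float) -> float:
    """Upper-tail probability of a standard normal beyond n_sigma.

    Assumption: one-sided Gaussian convention, where the p-value is the
    chance that background alone fluctuates upward by at least n_sigma.
    """
    return 0.5 * math.erfc(n_sigma / math.sqrt(2.0))


# Compare the usual "evidence" (3 sigma) and "discovery" (5 sigma) levels.
for n_sigma in (3.0, 5.0):
    p = one_sided_p_value(n_sigma)
    print(f"{n_sigma:.0f} sigma: p = {p:.2e} (about 1 in {1 / p:,.0f})")
```

Under this convention, a 5-sigma result corresponds to roughly a 1-in-3.5-million chance that background alone would produce so large an excess.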

Higgs bosons are produced in only about one out of a billion LHC collisions and live only one-septillionth of a second before their energy is converted into a cascade of other particles. Because it is impossible to see Higgs bosons directly, scientists use these secondary particles to study the Higgs’ properties. Since the Higgs boson's discovery in 2012, scientists had been able to identify only about thirty percent of all the predicted Higgs boson decays, while its decays to bottom quarks, which should occur roughly sixty percent of the time, had not yet been observed.

“The Higgs boson is an integral component of our universe and theorized to give all fundamental particles their mass,” said Alberto Belloni of the University of Maryland. “But previously we had only directly seen the Higgs couplings to the tau lepton, and the W and Z bosons. Now we have seen the decay of the Higgs to a quark-antiquark pair. This measurement shows for the first time that the Higgs gives mass to a quark.”

The Higgs field is theorized to interact with all massive particles in the Standard Model, the best theory scientists have to explain the behavior of subatomic particles. But many scientists suspect that the Higgs could also interact with massive particles outside the Standard Model, such as dark matter. By finding and mapping the Higgs bosons’ interactions with known particles, scientists can simultaneously probe for new phenomena.

The next step is to increase the precision of these measurements so that scientists can study this decay mode with a much greater resolution and explore what secrets the Higgs boson might be hiding.

Further information:

ATLAS: https://atlas.cern/updates/press-statement/observation-higgs-boson-decay-pair-bottom-quarks

CMS: http://cms.cern/higgs-observed-decaying-b-quarks-submitted