Researchers at the University of Maryland (UMD) have trained a small hybrid quantum computer to reproduce the features in a particular set of images.

The result, published Oct. 18, 2019, in the journal Science Advances, is among the first demonstrations of quantum hardware teaming up with conventional computing power. Here the pair tackled generative modeling, a machine learning task in which a computer learns to mimic the structure of a given dataset and generate examples that capture the essential character of the data.

“We combined one of the highest performance quantum computers with one of the most powerful AI programs—over the internet—to form a unique kind of hybrid machine,” says Joint Quantum Institute (JQI) Fellow Norbert Linke, an assistant professor of physics at UMD and a co-author of the new paper.

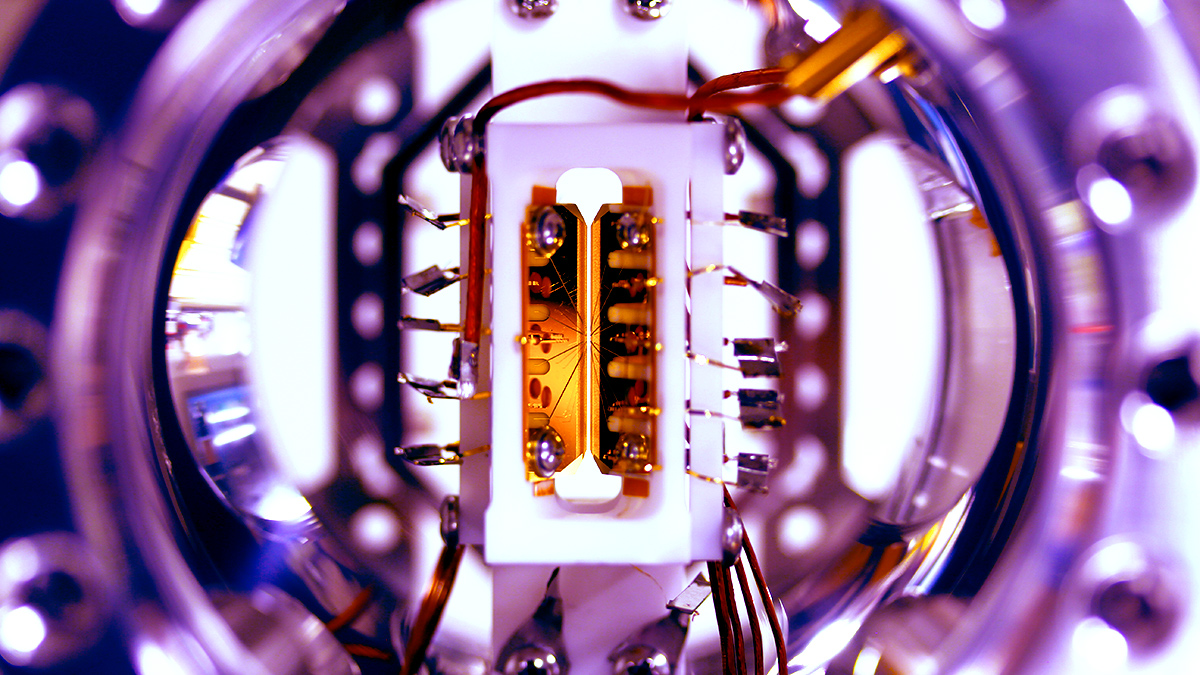

The researchers used four trapped atomic ions for the quantum half of their hybrid computer, with each ion representing a quantum bit, or qubit—the basic unit of information in a quantum computer. To manipulate the qubits, researchers punch commands into an ordinary computer, which interprets them and orchestrates a sequence of laser pulses that zap the qubits.

Close-up photo of an ion trap. Credit: S. Debnath and E. Edwards/JQI

The UMD quantum computer is fully programmable, with connections between every pair of qubits. “We can implement any quantum function by executing a standard set of gates between the qubits,” says JQI and Joint Center for Quantum Information and Computer Science (QuICS) Fellow Christopher Monroe, a physics professor at UMD who was also a co-author of the new paper. “We just needed to optimize the parameters of each gate to train our machine learning algorithm. This is how quantum optimization works.”

Monroe, Linke and their colleagues trained their computer to produce an output that matched the “bars-and-stripes” set, a collection of images with blocks of color arranged vertically or horizontally to look like bars or stripes—a standard dataset in generative modeling because of its simplicity.
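To make the dataset concrete, the short Python sketch below enumerates every bars-and-stripes image on a small grid. It is an illustration written for this article, not code from the study; the function name `bars_and_stripes` and the row-by-row image representation are our own choices. For the four-pixel (2×2) case it yields six images, because the all-dark and all-light images count as both bars and stripes.

```python
from itertools import product

def bars_and_stripes(rows, cols):
    """Return every rows x cols binary image made of vertical bars or horizontal stripes.

    Each image is a tuple of rows; each row is a tuple of 0/1 pixel values.
    """
    images = set()
    # Bars: every row repeats the same pattern, so each column is uniform.
    for pattern in product((0, 1), repeat=cols):
        images.add(tuple(pattern for _ in range(rows)))
    # Stripes: each row is filled with a single value.
    for values in product((0, 1), repeat=rows):
        images.add(tuple((v,) * cols for v in values))
    return sorted(images)

for image in bars_and_stripes(2, 2):
    print(image)
```

Calling `bars_and_stripes(2, 2)` returns 6 images; in general an r×c grid has 2^r + 2^c − 2 of them, since the two uniform images are counted once.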

“Machine learning is generally categorized into two types,” says Daiwei Zhu, the lead author of the paper and a graduate student in physics at JQI. “One enables you to tell whether something is a cat or dog, and the other lets you generate an image of a cat or dog. We’re performing a scaled-back version of the latter task.”

Turning the hybrid system into a properly trained generative model meant finding the laser sequence that would turn a simple input state into an output capable of capturing the patterns in the bars-and-stripes set, something that qubits could do more efficiently than regular bits. “In essence, the power of this lies in the nature of quantum superposition,” says Zhu, referring to the ability of qubits to hold multiple states at once: in this case, a superposition of all six bars-and-stripes images that fit in a four-pixel (2×2) grid.
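One way to picture that target, sketched here in Python under stated assumptions: map each pixel of a 2×2 image to one qubit (pixel k, counted row by row, becomes bit k of the basis-state index), then give each of the six valid images an equal amplitude of 1/√6 and the remaining ten of the 16 basis states zero amplitude. Measuring such a state returns each bars-and-stripes image with probability 1/6. The pixel-to-qubit mapping and the equal weighting are illustrative assumptions, not details taken from the paper.

```python
import math

N_QUBITS = 4  # one qubit per pixel of a 2x2 image (assumed row-major mapping)

def is_bas(index):
    """True if basis state `index` encodes a bars-and-stripes image (pixel k = bit k)."""
    p = [(index >> k) & 1 for k in range(N_QUBITS)]
    bars = p[0] == p[2] and p[1] == p[3]     # each column uniform
    stripes = p[0] == p[1] and p[2] == p[3]  # each row uniform
    return bars or stripes

valid = [i for i in range(2 ** N_QUBITS) if is_bas(i)]
amp = 1 / math.sqrt(len(valid))
# Equal superposition over the valid images; every other amplitude is zero.
target_state = [amp if i in valid else 0.0 for i in range(2 ** N_QUBITS)]
probabilities = [a * a for a in target_state]
```

Here `valid` comes out as the six indices 0, 3, 5, 10, 12 and 15, and the probabilities sum to one.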

Through a series of iterative steps, the researchers attempted to nudge the output of their hybrid computer closer and closer to the quantum bars-and-stripes state. They began by preparing the input qubits, subjecting them to a random sequence of laser pulses and measuring the resulting output. Those measurement results were then fed to a conventional, or “classical,” computer, which crunched the numbers and suggested adjustments to the laser pulses to make the output look more like the bars-and-stripes state.
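The loop described above can be sketched as a small classical simulation in Python. Everything here is a stand-in: the simulated circuit (a layer of single-qubit RY rotations, XX entangling gates between every pair of qubits, then another RY layer), the shot count, the Kullback-Leibler cost and the random-perturbation optimizer are assumptions chosen for illustration. They do not reproduce the study's gate sequence or its particle swarm and Bayesian optimizers. The simulated "quantum half" returns sampled outcome frequencies, as real hardware would, and the classical half decides which parameter adjustments to keep.

```python
import math
import random

N_QUBITS = 4
DIM = 2 ** N_QUBITS

def is_bars_or_stripes(i):
    # Pixel k of a 2x2 image (row-major) -> bit k of the basis-state index (assumed encoding).
    p = [(i >> k) & 1 for k in range(N_QUBITS)]
    return (p[0] == p[2] and p[1] == p[3]) or (p[0] == p[1] and p[2] == p[3])

TARGET = [1 / 6 if is_bars_or_stripes(i) else 0.0 for i in range(DIM)]
EDGES = [(0, 1), (0, 2), (0, 3), (1, 2), (1, 3), (2, 3)]  # all-to-all pairs
N_PARAMS = 2 * N_QUBITS + len(EDGES)  # 14 hypothetical gate angles

def apply_ry(state, q, theta):
    """Rotate qubit q about the y axis by theta."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    new = list(state)
    for i in range(DIM):
        if not (i >> q) & 1:
            j = i | (1 << q)
            new[i] = c * state[i] - s * state[j]
            new[j] = s * state[i] + c * state[j]
    return new

def apply_xx(state, a, b, chi):
    """Apply exp(-i chi X_a X_b); X_a X_b flips bits a and b together."""
    mask = (1 << a) | (1 << b)
    c, s = math.cos(chi), math.sin(chi)
    return [c * state[i] - 1j * s * state[i ^ mask] for i in range(DIM)]

def run_circuit(params):
    """Simulated quantum half: outcome probabilities of the parameterized circuit."""
    state = [1 + 0j] + [0j] * (DIM - 1)  # all qubits start in |0>
    it = iter(params)
    for q in range(N_QUBITS):
        state = apply_ry(state, q, next(it))
    for a, b in EDGES:
        state = apply_xx(state, a, b, next(it))
    for q in range(N_QUBITS):
        state = apply_ry(state, q, next(it))
    return [abs(amp) ** 2 for amp in state]

def measure(params, shots, rng):
    """Sample `shots` outcomes and return their observed frequencies."""
    counts = [0] * DIM
    for outcome in rng.choices(range(DIM), weights=run_circuit(params), k=shots):
        counts[outcome] += 1
    return [c / shots for c in counts]

def kl_cost(freqs, eps=1e-4):
    """KL(target || measured), clipped so unseen outcomes do not give infinity."""
    return sum(p * math.log(p / max(q, eps)) for p, q in zip(TARGET, freqs) if p > 0)

def train(iterations=200, shots=1000, step=0.3, seed=7):
    rng = random.Random(seed)
    params = [rng.uniform(-math.pi, math.pi) for _ in range(N_PARAMS)]  # random start
    best = kl_cost(measure(params, shots, rng))
    history = [best]
    for _ in range(iterations):
        # Classical half: propose a small random adjustment, keep it if the measured cost drops.
        trial = [p + rng.gauss(0, step) for p in params]
        cost = kl_cost(measure(trial, shots, rng))
        if cost < best:
            params, best = trial, cost
        history.append(best)
    return params, history
```

Because each cost is estimated from a finite number of shots, keeping only apparent improvements slightly favors lucky estimates; a real experiment might re-measure promising settings before accepting them.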

By adjusting the laser parameters and repeating the procedure, the team could test whether the output eventually converged on the desired quantum state. They found that in some cases it did, and in some cases it didn’t.
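Deciding whether a run has converged requires a numerical yardstick. A minimal sketch, assuming the Kullback-Leibler divergence from the ideal bars-and-stripes distribution as that yardstick and a hypothetical tolerance of 0.05 (neither is taken from the paper), could look like this:

```python
import math

# Basis-state indices of the six 2x2 bars-and-stripes images (pixel k -> bit k, assumed encoding).
BAS_2X2 = {0, 3, 5, 10, 12, 15}

def kl_to_target(counts, eps=1e-4):
    """KL(target || measured) for a dict mapping basis-state index -> number of shots."""
    shots = sum(counts.values())
    p = 1 / len(BAS_2X2)  # ideal probability of each valid image
    # Outcomes outside the target set lower the measured weight on valid images, raising the cost.
    return sum(p * math.log(p / max(counts.get(i, 0) / shots, eps)) for i in BAS_2X2)

def has_converged(counts, tol=0.05):  # tol is a hypothetical threshold
    return kl_to_target(counts) < tol
```

Counts spread evenly over the six valid images give a divergence of zero, while counts spread evenly over all 16 outcomes give log(16/6), about 0.98, and would not count as converged.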

The researchers studied the convergence using two different patterns of connectivity between qubits. In one, each qubit was able to interact with all the others, a situation that the team called all-to-all connectivity. In the second, a central qubit interacted with the other three, none of which interacted directly with one another. They called this star connectivity. (This was an artificial constraint, as the four ions are naturally able to interact in the all-to-all fashion. But it could be relevant to experiments with a larger number of ions.)
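The two connectivity patterns can be written down as lists of qubit pairs that are allowed to interact directly. In this Python sketch the qubits are numbered 0 to 3 and qubit 0 is arbitrarily chosen as the center of the star; the paper's labeling may differ, and the helper `allowed` is hypothetical.

```python
from itertools import combinations

N_QUBITS = 4
CENTER = 0  # hypothetical choice of the star's central qubit

# All-to-all: every pair of qubits may interact directly (6 pairs for 4 qubits).
ALL_TO_ALL = list(combinations(range(N_QUBITS), 2))

# Star: only pairs that include the central qubit (3 pairs for 4 qubits).
STAR = [tuple(sorted((CENTER, q))) for q in range(N_QUBITS) if q != CENTER]

def allowed(pair, edges):
    """True if the two qubits in `pair` may be entangled directly under `edges`."""
    return tuple(sorted(pair)) in edges
```

A full layer of entangling gates therefore uses six two-qubit gates under all-to-all connectivity but only three under star connectivity.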

The all-to-all interactions produced states closer to bars-and-stripes after training short sequences of pulses. But the experimenters had another setting to play with: They also studied the performance of two different number-crunching methods used on the conventional half of the hybrid computer.

One method, called particle swarm optimization, worked well when all-to-all interactions were available, but it failed to converge on the bars-and-stripes output for star connectivity. A second method, suggested by three researchers at Mind Foundry Limited, an AI company based in Oxford, UK, proved much more successful across the board.
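Particle swarm optimization is a general-purpose technique, and a minimal version fits in a few dozen lines of Python. In this sketch each "particle" is a candidate set of gate parameters that moves under the pull of its own best position and the swarm's best position. The inertia and attraction weights (0.7 and 1.5) are commonly used values, not the settings from the study, and the quadratic test cost merely stands in for the cost measured on the quantum hardware.

```python
import random

def pso(cost, dim, n_particles=20, iterations=100, bounds=(-3.0, 3.0),
        w=0.7, c1=1.5, c2=1.5, seed=0):
    """Minimize `cost` over dim real parameters with a basic global-best particle swarm."""
    rng = random.Random(seed)
    lo, hi = bounds
    pos = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]                      # each particle's best position so far
    pbest_cost = [cost(p) for p in pos]
    g = min(range(n_particles), key=pbest_cost.__getitem__)
    gbest, gbest_cost = pbest[g][:], pbest_cost[g]   # swarm-wide best
    history = [gbest_cost]
    for _ in range(iterations):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Inertia + pull toward personal best + pull toward swarm best.
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                pos[i][d] += vel[i][d]
            c = cost(pos[i])  # on hardware this would mean running and measuring the circuit
            if c < pbest_cost[i]:
                pbest[i], pbest_cost[i] = pos[i][:], c
                if c < gbest_cost:
                    gbest, gbest_cost = pos[i][:], c
        history.append(gbest_cost)
    return gbest, gbest_cost, history
```

For example, `pso(lambda x: sum(v * v for v in x), dim=2)` drives a two-parameter quadratic toward its minimum at the origin. Every cost evaluation corresponds to one round of measurements, so a swarm of 20 particles spends 20 evaluations per iteration.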

The second method, called Bayesian optimization, was accessed over the internet and enabled the researchers to train sequences of laser pulses that could produce the bars-and-stripes state for both all-to-all and star connectivity. It also significantly reduced the number of steps in the iterative training process, roughly halving the time it took to converge on the correct output.
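Bayesian optimization builds a statistical model of the cost from the points measured so far and uses it to choose where to measure next, which is why it often needs fewer evaluations than a swarm. The pure-Python sketch below is a generic version with a Gaussian-process model, an RBF kernel and the expected-improvement rule, tuning a single hypothetical parameter against a toy cost. It is not Mind Foundry's implementation, and the kernel length, noise level, candidate grid and iteration counts are illustrative assumptions.

```python
import math
import random

def rbf(a, b, length=1.0):
    """Squared-exponential (RBF) kernel with unit signal variance."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)

def cholesky(A):
    """Lower-triangular L with L L^T = A for a symmetric positive-definite A."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][i] = math.sqrt(A[i][i] - s)
            else:
                L[i][j] = (A[i][j] - s) / L[j][j]
    return L

def forward_solve(L, b):
    """Solve L x = b by forward substitution."""
    x = []
    for i in range(len(b)):
        x.append((b[i] - sum(L[i][k] * x[k] for k in range(i))) / L[i][i])
    return x

def fit(xs, ys, noise=1e-4):
    """Condition a Gaussian process (constant mean = average of ys) on the observations."""
    mean_y = sum(ys) / len(ys)
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    L = cholesky(K)
    w = forward_solve(L, [y - mean_y for y in ys])  # L^-1 (y - mean)
    return list(xs), L, w, mean_y

def predict(model, x):
    """Posterior mean and standard deviation of the cost at x."""
    xs, L, w, mean_y = model
    v = forward_solve(L, [rbf(a, x) for a in xs])  # L^-1 k(xs, x)
    mu = mean_y + sum(vi * wi for vi, wi in zip(v, w))
    var = max(1.0 - sum(vi * vi for vi in v), 0.0)
    return mu, math.sqrt(var)

def expected_improvement(mu, sigma, best, xi=0.01):
    """Expected amount by which the cost at a point would beat `best` (minimization)."""
    if sigma < 1e-12:
        return 0.0
    gain = best - mu - xi
    z = gain / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return gain * cdf + sigma * pdf

def bayes_opt(cost, n_init=3, n_iter=20, lo=0.0, hi=2 * math.pi, grid=200, seed=0):
    rng = random.Random(seed)
    xs = [rng.uniform(lo, hi) for _ in range(n_init)]  # a few random first measurements
    ys = [cost(x) for x in xs]
    candidates = [lo + (hi - lo) * i / (grid - 1) for i in range(grid)]
    for _ in range(n_iter):
        model = fit(xs, ys)
        best = min(ys)
        # Measure next wherever the model expects the largest improvement.
        x_next = max(candidates, key=lambda c: expected_improvement(*predict(model, c), best))
        xs.append(x_next)
        ys.append(cost(x_next))
    i_best = min(range(len(ys)), key=ys.__getitem__)
    return xs[i_best], ys[i_best], ys

def toy_cost(theta):
    """Hypothetical one-parameter cost with its minimum (zero) at theta = 1."""
    return math.sin((theta - 1.0) / 2) ** 2
```

Running `bayes_opt(toy_cost)` spends 23 cost evaluations in total. The extra classical work of refitting the model each round is cheap compared with a round of measurements on real hardware, which is the trade-off this approach exploits.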

“What our experiment shows is that a quantum-classical hybrid machine, while in principle more powerful than either of the components individually, still needs the right classical piece to work,” says Linke. “Using these schemes to solve problems in chemistry or logistics will require both a boost in quantum computer performance and tailored classical optimization strategies.”

Story by Chris Cesare

In addition to Linke, Monroe and Zhu, co-authors of the research paper include University College London computer science student Marcello Benedetti; JQI physics graduate students Nhung Hong Nguyen, Cinthia Huerta Alderete and Laird Egan and recent JQI Ph.D. graduate Kevin Landsman; Zapata Computing scientist Alejandro Perdomo-Ortiz; Mind Foundry Limited scientists Nathan Korda, Alistair Garfoot and Charles Brecque; and Central Connecticut State University Mathematical Sciences Professor Oscar Perdomo.

Christopher Monroe

Chris Cesare