Published: Monday, April 21 2025 01:00

Despite existing everywhere, the quantum world is a foreign place where many of the rules of daily life don’t apply. Quantum objects jump through solid walls; quantum entanglement connects the fates of particles no matter how far they are separated; and quantum objects may behave like waves in one part of an experiment and then, moments later, appear to be particles.

These quantum peculiarities play out at such a small scale that we don’t usually notice them without specialized equipment. But in superfluids, and some other quantum materials, uncanny behaviors can appear at a human scale (although only in extremely cold and carefully controlled environments). In a superfluid, millions of atoms or more can come together and share the same quantum state.

Acting together as a coordinated quantum object, the atoms in superfluids break the rules of normal fluids such as water, air and everything else that flows and changes shape to fill spaces. When liquid helium turns into a superfluid it suddenly gains the ability to climb vertical walls and escape airtight containers. And all superfluids share the ability to flow without friction.

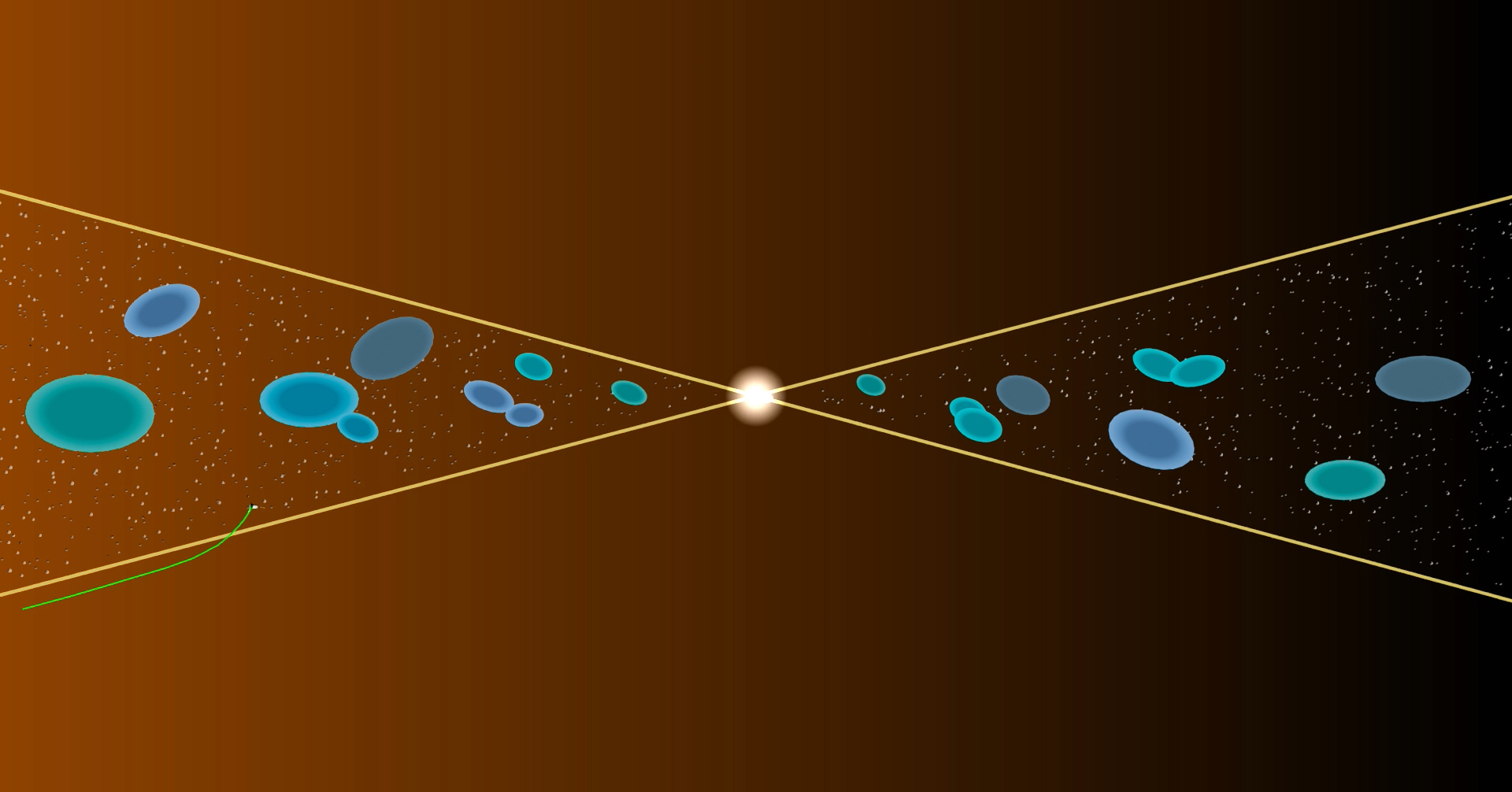

But these quantum superpowers also come with a limitation. All superfluids are more constrained than normal fluids in how they form vortices where fluid circulates around a central point. Any large vortex in a superfluid must be made up of individual smaller vortices, each with a quantized amount of energy.

Despite these major differences from normal fluids, one of the lingering mysteries around superfluids is whether they might, in one way, behave in a surprisingly normal manner. The frictionless flow and unique vortices seem like they should make superfluids break the rules of turbulence, which is the chaotic flow of fluids characterized by unpredictable eddies and vortices. However, prior experiments hint at superfluids following the familiar rules anyway, even though they seem to be lacking a normally crucial ingredient: friction.

In normal fluids, the swirling patterns of turbulence are found in many situations, from liquids flowing in rivers, pipes and blood vessels to the atmospheres shifting over the surfaces of planets to the air passing around airplanes and golf balls. In the early 1940s, the Soviet mathematician Andrey Kolmogorov introduced a theory that describes the statistical patterns common to turbulence and relates them to the way energy moves through different size scales in fluids.

Even though superfluids lack the seemingly crucial ingredient of friction, prior experiments have shown signs that superfluids may experience turbulence that follows rules similar to those described by Kolmogorov. But comparisons have been hampered since superfluid research relies on different tools than experiments studying regular fluids. In particular, superfluid research hasn’t been able to measure velocities at distinct points within a type of superfluid called a Bose-Einstein condensate (BEC). Maps showing the velocity at each point, which physicists call a “velocity field,” are a basic tool for understanding fluid dynamics, but when studying superfluid behaviors, researchers have largely navigated their quantum quirks without that useful guide.

Now, a new technique developed by Joint Quantum Institute researchers has introduced a tool for measuring velocities in a BEC superfluid and applied it to studying superfluid turbulence. In a paper published as an Editors’ Suggestion in Physical Review Letters on February 25, 2025, JQI Fellow and Adjunct Professor Ian Spielman, together with Mingshu Zhao and Junheng Tao, who both worked with Spielman as graduate students and then postdoctoral researchers at JQI, presented a method of measuring the velocity of currents at specific spots in a BEC superfluid made from rubidium atoms. For the technique to work, they had to keep the BEC so thin that it could effectively move in only two dimensions. In the paper, they shared both the first direct velocity field measurements for a rotating atomic BEC superfluid (which wasn’t experiencing turbulence) and an analysis of how the velocities in a chaotically stirred-up superfluid compared to normal turbulence.

The new paper is the culmination of Zhao’s graduate and postdoctoral work at JQI, which was dedicated to developing a way to measure the individual velocities in superfluid currents. Spielman, who was Zhao’s advisor and is also a physicist at the National Institute of Standards and Technology and a Senior Investigator at the National Science Foundation Quantum Leap Challenge Institute for Robust Quantum Simulation, encouraged him to apply the new tool to one of the most challenging problems in the field: quantum turbulence.

“From his first day in the lab Mingshu was interested in developing techniques for measuring the velocity field of a BEC, and after many dead-ends I am really excited that we found a technique that works,” Spielman says.

Previous experiments exploring superfluid turbulence only obtained information about what velocities were present in a superfluid overall, without learning anything about which parts of the superfluid moved at which velocities. Having the bulk data from these measurements is like knowing how many roads in a state have a certain speed limit but not knowing anything about the speed limit on any particular road. Those prior experiments showed signs that superfluids might experience turbulence similar to normal fluids but weren’t enough to settle the question. The measurements could also be compatible with a new form of turbulence requiring its own mathematical description.

To measure velocities at distinct points within a superfluid, Zhao and his colleagues decided to introduce tracers—objects that would move with the superfluid, wouldn’t disrupt its state, and would be easy to spot. Using tracers is like dropping rubber ducks into a stream or scattering confetti in the wind to reveal where the currents flow.

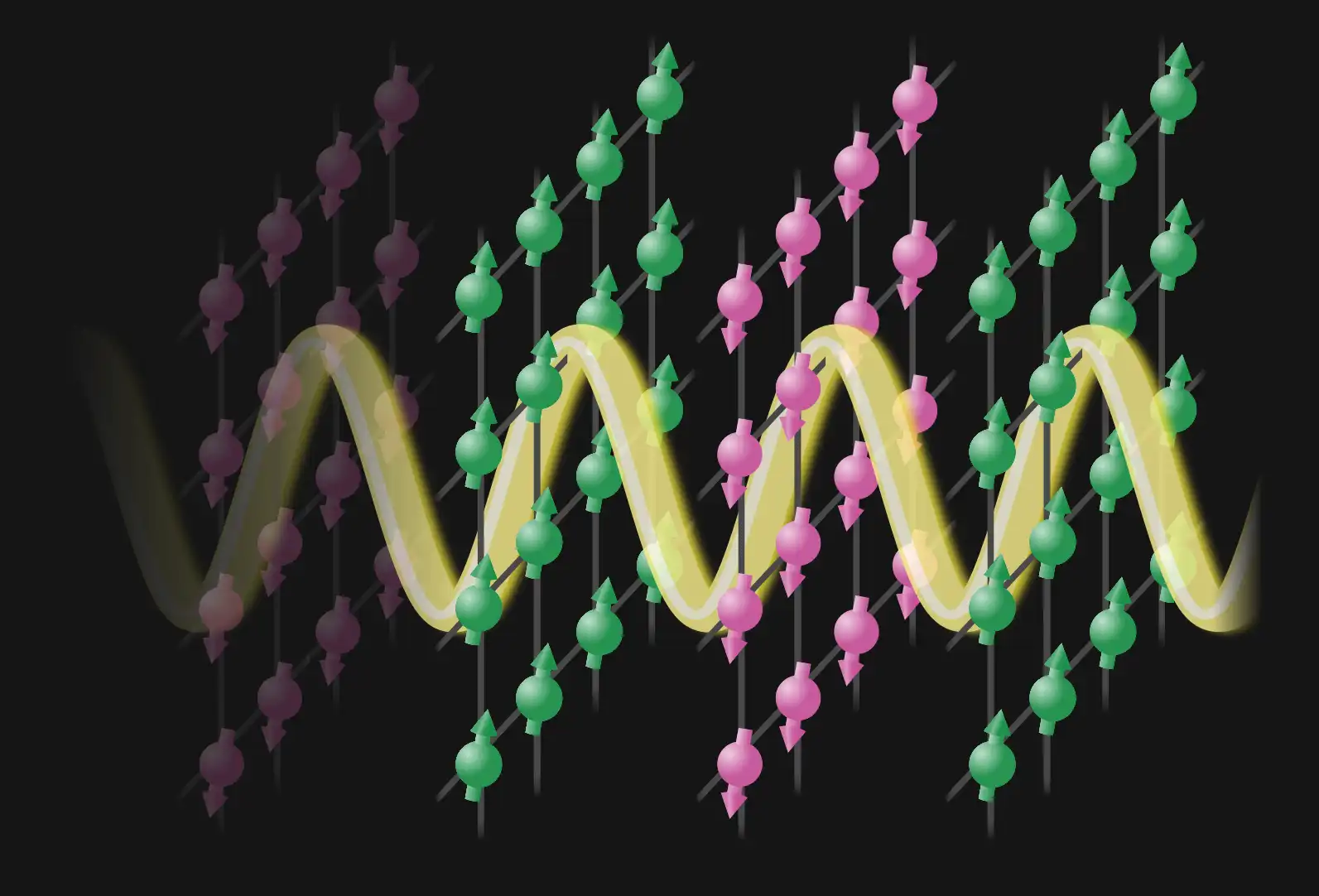

But rubber ducks, confetti and even most tiny things would be impractical in the experiment and disrupt the delicate quantum state of the superfluid. The team realized they didn’t need to introduce something new; everything they needed was already in their experiment. Their innovation was to intentionally knock some of the rubidium atoms in the superfluid into a new quantum state that could be easily detected. Each atom in the BEC acts like a tiny magnet—it has the quantum property of spin—and wants to point along any magnetic field supplied in the lab. By shooting a precisely calibrated laser at sections of the BEC, they could impart enough energy to knock some of the spins of the atoms into pointing in a new direction. These new off-kilter states are called “spinor impurities.”

Spinor impurities work as tracers in the superfluid because they respond differently to light than the rest of the atoms. The team selected a second laser that would pass through the rest of the superfluid but be absorbed by the spinor impurities. When the researchers shone the laser on the superfluid, the shadows cast by the tracers marked their positions.

However, the spinor impurities weren’t perfect tracers. Absorbing the light also knocked the spinor impurities out of the superfluid, so the team only got one chance to check in on each tracer’s journey. During the experiment, this meant they could only get one velocity measurement per tracer. Also, the researchers could use only a limited number of tracers per run and had to check in on them quickly. Each tracer is made of many spinor impurities that naturally diffuse. Instead of behaving like a rubber duck that can be followed indefinitely, the tracers behave more like a drop of food coloring added to swirling water that spreads out as it travels. The team couldn’t wait too long to observe a tracer lest it diffuse into a useless cloud. They also couldn’t pack very many tracers into one experiment as they tended to overlap quickly and become indistinguishable.

So the tracers allowed Zhao and his colleagues to measure velocities at distinct spots, but the researchers couldn’t continuously watch as the tracers followed the currents in real time. To get a complete picture of the velocity field they had to instead take a bunch of snapshots a few points at a time and then combine them into a collage showing the velocity field.
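As a rough illustration of that collage step, the sketch below averages sparse velocity samples from many separate runs onto a coarse grid. It is not the team’s analysis code: the function names, the `(x, y, vx, vy)` sample layout, the grid cell size, and the simple displacement-over-time velocity estimate are all assumptions made for the example.

```python
from collections import defaultdict


def tracer_velocity(start, end, elapsed):
    """Estimate one velocity from one tracer (assumed: displacement / time)."""
    return ((end[0] - start[0]) / elapsed, (end[1] - start[1]) / elapsed)


def assemble_velocity_field(snapshots, cell_size):
    """Average sparse (x, y, vx, vy) samples from many runs onto a grid.

    Each snapshot is a short list of samples, mirroring the handful of
    tracers available per experimental run. Returns a dict mapping a grid
    cell (column, row) to the mean velocity (vx, vy) seen in that cell.
    """
    sums = defaultdict(lambda: [0.0, 0.0, 0])
    for snapshot in snapshots:
        for x, y, vx, vy in snapshot:
            cell = (int(x // cell_size), int(y // cell_size))
            acc = sums[cell]
            acc[0] += vx
            acc[1] += vy
            acc[2] += 1
    return {cell: (sx / n, sy / n) for cell, (sx, sy, n) in sums.items()}
```

Averaging like this only makes sense when the flow is the same from run to run, as in the steady rotation test; it would not be meaningful for a flow that changes unpredictably between runs.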

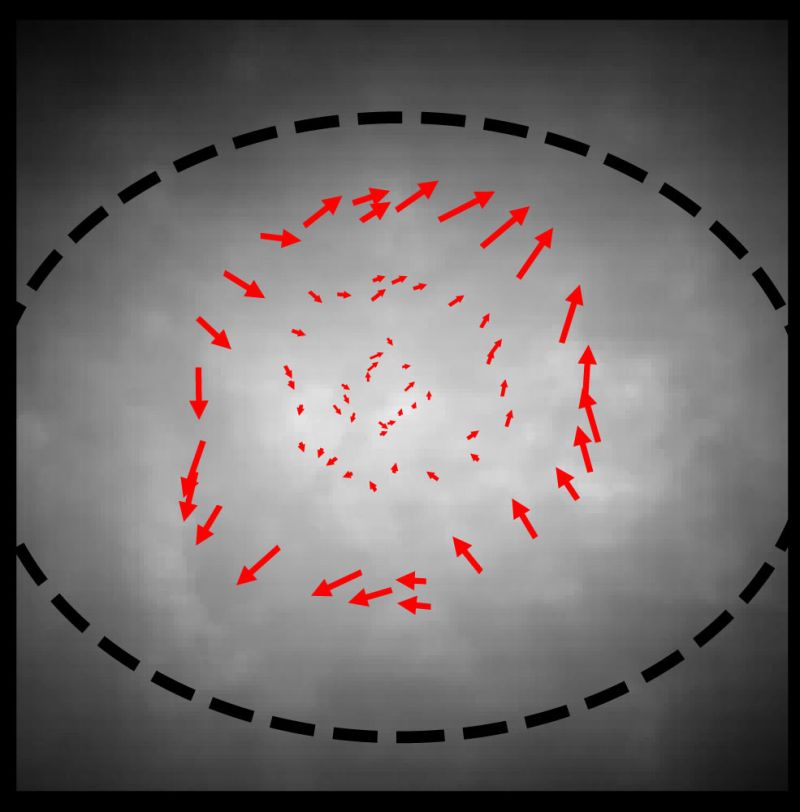

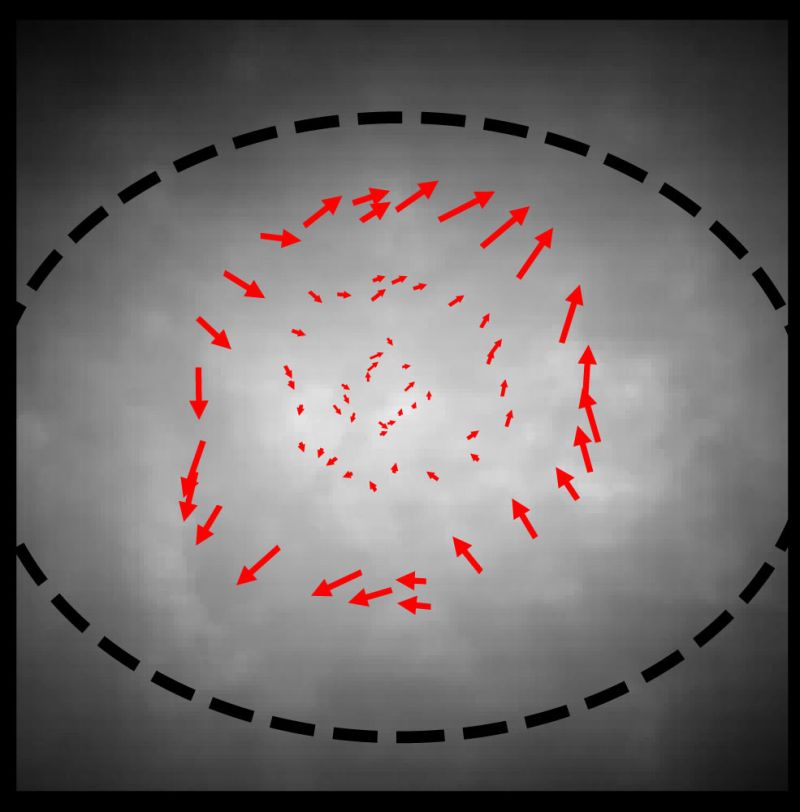

Using just two to four tracers at a time, the team first tested the technique by measuring non-turbulent flow. They spun the superfluid’s container at a slow and steady rate that theory predicted would create a particular current pattern in the superfluid but wouldn’t create a superfluid vortex. Piecing together several measurements gave them an overall view of how the superfluid was flowing. Their results were the first direct visualization of a flow pattern in a rotating atomic BEC superfluid.

An example of a non-turbulent velocity field measured using the new technique. (Credit: Mingshu Zhao, UMD)

The same methodical approach can’t work for mapping turbulence. Turbulence is characterized by chaos with currents shifting into new directions, so images from subsequent observations wouldn’t fit together to show continuous currents in a velocity field. The result would just be a mess of unrelated velocities.


Since Zhao and his colleagues couldn’t map out turbulent currents in the BEC, they had to instead resort to statistics describing the relationship of velocity measurements taken at just a couple of points at a time. The randomness of turbulence means that Kolmogorov’s theory relies on a statistical description of how distant velocities tend to be related to each other in turbulent flows and doesn’t provide exact predictions of velocity fields. Despite the velocity varying randomly at every point in turbulence, Kolmogorov still identified a pattern in the average way that the differences in velocities at two points tend to depend on the distance between them. So repeatedly observing just two points at a time and then analyzing them as a group can be enough to check if the velocities might fit Kolmogorov’s traditional description of turbulence.
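One common way to write that pattern down is the second-order structure function: the average squared velocity difference between two points a distance r apart, which Kolmogorov’s theory predicts grows as r to the 2/3 power in three-dimensional turbulence. The sketch below estimates that quantity from paired measurements and fits the exponent. It is a simplified illustration with hypothetical function names and data layout, and it leaves out the density data the team combined with their velocity statistics.

```python
import math


def second_order_structure_function(pairs, bin_edges):
    """Average squared velocity difference, grouped by point separation.

    pairs: iterable of ((x1, y1), (vx1, vy1), (x2, y2), (vx2, vy2)),
           one entry per two-point measurement (hypothetical layout).
    bin_edges: increasing list of separation values defining the bins.
    Returns a list of (bin_center, mean_squared_difference) for every
    bin that received at least one measurement.
    """
    n_bins = len(bin_edges) - 1
    totals = [0.0] * n_bins
    counts = [0] * n_bins
    for (x1, y1), (vx1, vy1), (x2, y2), (vx2, vy2) in pairs:
        r = math.hypot(x2 - x1, y2 - y1)
        dv2 = (vx2 - vx1) ** 2 + (vy2 - vy1) ** 2
        for i in range(n_bins):
            if bin_edges[i] <= r < bin_edges[i + 1]:
                totals[i] += dv2
                counts[i] += 1
                break
    centers = [0.5 * (bin_edges[i] + bin_edges[i + 1]) for i in range(n_bins)]
    return [(c, t / n) for c, t, n in zip(centers, totals, counts) if n > 0]


def log_log_slope(points):
    """Least-squares slope of log(S2) against log(r)."""
    usable = [(math.log(r), math.log(s)) for r, s in points if r > 0 and s > 0]
    n = len(usable)
    mean_x = sum(x for x, _ in usable) / n
    mean_y = sum(y for _, y in usable) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in usable)
    den = sum((x - mean_x) ** 2 for x, _ in usable)
    return num / den
```

A fitted slope near 2/3 over the intermediate range of separations would be consistent with Kolmogorov’s description; a clearly different slope would point to some other kind of flow.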

“Kolmogorov just gives a very good explanation for those statistics in turbulence,” Zhao says. “And to get the statistics, he used a very interesting idea—the energy cascade.”

The cascade of energy describes the flow of energy from large scales down to the smallest scales where it is lost. It arises because whatever stirring, blowing or other source of motion introduces energy into a fluid usually plays out over the largest distances involved in the fluid’s flow, but that energy doesn’t stay at that scale. The energy and motion inevitably transition through an intermediate scale before being lost at the smallest scale where atoms and molecules interact.

The size of the large scale varies from one case to the next and depends on how the motion is introduced. For instance, motion can come in as currents of heat blowing smoke up over a fire, a spoon stirring a teacup or a waterfall crashing into a pool. But the energy and motion don’t stay at that scale; eventually, most of it is lost at a small scale, generally from friction. Ultimately, energy is lost as the moving smoke pulls along calmer cooler air, the tea drags against the teacup and cool air, and the water crashes against rocks and tugs along calmer water. The energy must get from the initial large sweeping scales to the small scales where it is lost, and that transfer occurs at the medium scale where energy moves with almost no loss.

This energy cascade across scales results in vortices and has been observed in a broad array of fluids and situations. Kolmogorov identified the cascade of energy and the statistical description of the resulting turbulent fluid motion.
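For readers who want the formula behind that statistical description, Kolmogorov’s theory is usually summarized by a power law for the energy spectrum in the intermediate range of scales. The symbols here are the standard textbook ones and do not appear in the article itself: $E(k)$ is the energy per unit wavenumber $k$ (an inverse length scale), $\varepsilon$ is the rate at which energy is passed down the cascade, and $C$ is a dimensionless constant determined from experiments.

$$
E(k) = C\,\varepsilon^{2/3}\,k^{-5/3}
$$

In this range the spectrum depends only on the energy flux and the scale, not on how the fluid was stirred or on the details of how the energy is finally lost, which is why the same pattern appears in such different flows.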

Sometimes, though, even in regular fluids, things get more complicated. In particular, experiments looking at the rare cases of two-dimensional fluid flows suggest that in addition to the regular energy cascade they experience an inverse energy cascade process. In an inverse energy cascade, some of the energy gets lost at a scale even larger than the scale where it was introduced.

To see what their two-dimensional superfluid did, Zhao and his colleagues needed to stir up currents that might be turbulent. They were able to use laser beams aimed at the flat superfluid as “stirring rods.” Using a precision array of adjustable mirrors, they maneuvered the two lasers around the superfluid. Before introducing the tracers for each measurement, they briefly set the two stirring rods moving in opposite directions, tracing random loops around the superfluid. (Since the two rods were made of light, the researchers didn’t have to worry about them colliding on their random circuits like actual rods or spoons would.)

They took many measurements of velocities two points at a time shortly after stirring the superfluid up. They also measured the superfluid’s density. Combining the density data with their statistical analysis of how the velocities at different points compared provided them with a new way to compare the superfluid’s behavior to Kolmogorov’s theory. The team’s data matched the theory, but with a twist: It matched what is expected for normal fluid turbulence in three dimensions, despite their superfluid being effectively confined to two.

The result left lingering mysteries. Since superfluids don’t have friction to remove energy at the smallest scales, what produces the turbulence in superfluids? And why does a two-dimensional superfluid behave like normal fluids flowing in three dimensions?

The team speculated that instead of friction, it is the superfluid losing particles that removes energy and creates turbulence. To investigate, Zhao and his colleagues performed numerical simulations in which atoms escaped from the experiment and compared them to their results. They found that their data aligned with the simulations, and both were consistent with the superfluid experiencing turbulent flow that matched Kolmogorov’s theory.

The researchers also presented a possible reason why the turbulence in their two-dimensional superfluid resembled that of three-dimensional regular fluids. They argued that the inverse energy cascade in two-dimensional regular fluids requires that the fluid be incompressible, meaning that adding pressure won’t pack more fluid into a small space and create extra room. The BEC superfluid used in the experiment can easily be compressed and packed into small areas, unlike water and many normal fluids. That difference likely prevented the inverse cascade and produced the more mundane turbulence normally seen in three-dimensional fluids. The researchers also identified an additional constraint on superfluids that was not present in their experiment but might recreate the effect of incompressibility and produce an inverse energy cascade in other two-dimensional superfluids.

“With this experimental method, you can study quantum fluids better than ever,” Zhao says. “With this, we have more information. We have more subjects to study. We can see the statistics better for the turbulence experiments, and we will have a better understanding from that.”

Zhao says he hopes to do further simulations that more realistically show how the dissipation likely occurred in their experiment. However, signs of turbulence have also been observed in other superfluids, whose dissipation processes probably differ and will likely require slightly different explanations. Zhao also hopes that this isn’t the only tool invented for measuring velocities in atomic superfluids, since techniques compatible with other types of superfluids and experimental setups could reveal additional physics hiding beneath the surfaces of superfluids.

Original story by Bailey Bedford: https://jqi.umd.edu/news/mysteriously-mundane-turbulence-revealed-2d-superfluid

Prof. Victor Yakovenko, Dr. Ti Xie, and Prof. Cheng Gong. Photo credit: Shanchuan Liang and Dhanu Chettri
